GIFiply: Increasing the compressibility of GIF images

In part 1 of my Ph.D. research, I developed an image processing
technique which increases the compressibility of images under
Lempel-Ziv based algorithms. One application of particular
importance is the GIF image format, whose compression is Lempel-Ziv
based (LZW) and which dominates data on the web today. This approach
can reduce data requirements by up to 40% with virtually no visual
loss. Further, this approach can be combined with existing GIF
reduction techniques to yield images which are as much as 60% smaller,
yet still virtually indistinguishable from the originals.
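
To illustrate why small pixel changes can help a Lempel-Ziv coder, here is a minimal sketch that counts the codes a textbook LZW encoder emits for a row of pixels before and after a crude flattening step. The helper names, the sample scanline, and the flattening rule are all assumptions made for illustration; the flattening is only a stand-in and is not the GIFiply technique itself.

```python
def lzw_code_count(symbols):
    """Return the number of codes a textbook LZW encoder emits for `symbols`."""
    # The dictionary starts with every single symbol that occurs in the input.
    table = {(s,): i for i, s in enumerate(sorted(set(symbols)))}
    current = ()
    emitted = 0
    for s in symbols:
        candidate = current + (s,)
        if candidate in table:
            current = candidate            # keep extending the current match
        else:
            emitted += 1                   # output the code for `current`
            table[candidate] = len(table)  # learn the new, longer string
            current = (s,)
    if current:
        emitted += 1                       # flush the final match
    return emitted


def flatten(pixels, step):
    """Hypothetical stand-in for a visually small change: floor to multiples of `step`."""
    return [step * (p // step) for p in pixels]


# Hypothetical scanline: a nearly flat region with a small repeating ripple.
row = [100 + (i % 3) for i in range(256)]
flat_row = flatten(row, 4)

print(lzw_code_count(row), lzw_code_count(flat_row))
```

The flattened row needs fewer codes because the encoder's matches grow longer when the same strings of pixels recur exactly, which is the property an LZ-oriented preprocessing step would try to encourage.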

|

JPEG Blocking artifact removal

At high compression levels, the quantization of DCT coefficients
used by the JPEG image format results in "blocking" artifacts. I have
developed a method for reducing those artifacts by creating a new
smooth basis which can recover a non-blocky image from the same
quantized DCT coefficients.
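
The origin of the artifact can be seen in a small sketch. It applies an orthonormal DCT to two adjacent 8-sample blocks of a smooth ramp, quantizes the coefficients coarsely, and compares the jump at the block boundary before and after. The one-dimensional blocks and the single uniform quantization step are simplifying assumptions (JPEG works on 8x8 blocks with a per-frequency quantization table), and the sketch only reproduces the problem; it does not implement the smooth-basis method described above.

```python
import math

N = 8  # samples per block (JPEG uses 8x8 blocks; this sketch uses 8x1)


def dct(block):
    """Orthonormal DCT-II of one N-sample block."""
    out = []
    for k in range(N):
        scale = math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)
        out.append(scale * sum(
            x * math.cos(math.pi * (2 * n + 1) * k / (2 * N))
            for n, x in enumerate(block)))
    return out


def idct(coeffs):
    """Inverse of `dct` (orthonormal DCT-III)."""
    out = []
    for n in range(N):
        total = math.sqrt(1 / N) * coeffs[0]
        total += sum(
            math.sqrt(2 / N) * coeffs[k]
            * math.cos(math.pi * (2 * n + 1) * k / (2 * N))
            for k in range(1, N))
        out.append(total)
    return out


def quantize_roundtrip(block, step):
    """Quantize every coefficient with one uniform step, then reconstruct."""
    quantized = [round(c / step) for c in dct(block)]
    return idct([q * step for q in quantized])


ramp = [4 * n for n in range(2 * N)]   # smooth gradient rising 4 per sample
left = quantize_roundtrip(ramp[:N], step=60)
right = quantize_roundtrip(ramp[N:], step=60)

original_jump = ramp[N] - ramp[N - 1]  # difference across the block boundary
decoded_jump = right[0] - left[-1]
print(original_jump, decoded_jump)
```

With this coarse step only the DC coefficient of each block survives, so each block decodes to a flat value and the gentle gradient turns into a visible step at the boundary.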

|

|

|

Poxels: Probabilistic Voxel Reconstruction

In this project I examined the problem of reconstructing a
voxel representation of 3D space from a series of 2D or 1D projections
of the space. We reconstruct the 3D space by optimizing over the
probability that each voxel is visible in each of the projections. An
iterative algorithm is used to find the optimal probability
distribution which jointly explains all the observed projections.

|

Lempel-Ziv differential coding (LZd)

I've developed a dictionary-based universal compression
scheme which is able to use approximate string matches to compress
data such as sounds and images, in which sequences of symbols are
rarely repeated exactly but are often repeated approximately.
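
As a rough sketch of the idea, the following toy coder keeps a dictionary of previously seen blocks and encodes a new block as a dictionary index plus small differences whenever an approximate match exists. The fixed block size, the tolerance, the token layout, and the function names are all assumptions made for illustration; this is not the LZd algorithm itself.

```python
def encode(samples, block=4, tolerance=2):
    """Encode `samples` as literal blocks or (dictionary index, small differences)."""
    dictionary, tokens = [], []
    for start in range(0, len(samples), block):
        chunk = tuple(samples[start:start + block])
        best = None  # (largest per-sample error, dictionary index)
        for index, entry in enumerate(dictionary):
            if len(entry) != len(chunk):
                continue
            error = max(abs(a - b) for a, b in zip(chunk, entry))
            if error <= tolerance and (best is None or error < best[0]):
                best = (error, index)
        if best is None:
            tokens.append(("literal", chunk))
            dictionary.append(chunk)  # only literals extend the dictionary
        else:
            entry = dictionary[best[1]]
            diffs = tuple(a - b for a, b in zip(chunk, entry))
            tokens.append(("match", best[1], diffs))
    return tokens


def decode(tokens):
    """Rebuild the samples, growing the same dictionary the encoder used."""
    dictionary, samples = [], []
    for token in tokens:
        if token[0] == "literal":
            dictionary.append(token[1])
            samples.extend(token[1])
        else:
            _, index, diffs = token
            samples.extend(b + d for b, d in zip(dictionary[index], diffs))
    return samples


# Hypothetical signal: the first block recurs twice with small perturbations.
signal = [10, 20, 30, 40, 11, 19, 30, 42, 10, 21, 29, 40, 90, 80, 70, 60]
tokens = encode(signal)
print([t[0] for t in tokens])
print(decode(tokens) == signal)
```

An exact-match dictionary coder would treat the second and third blocks as new material. Here they become references with differences of small magnitude, which an entropy coder could then store cheaply.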

|

|

|

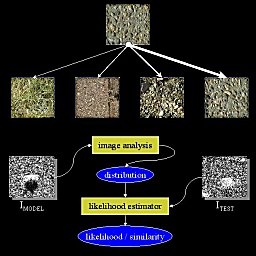

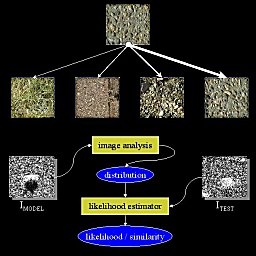

Distributions From Images

In this talk I describe a technique for modeling images by
attempting to approximate directly the distributions from which they
were generated. These approximations capture the texture
characteristics within images, using an image representation which
measures the joint occurrence of features across spatial resolutions.

An image classification system can be designed using a similarity
metric based on the likelihood that the distribution derived from one
image could have generated another. Classification of natural textures
indicates a high level of specificity, and recent results on target
detection in SAR imagery are encouraging.
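
The likelihood-based similarity can be sketched in a few lines. Here each image is reduced to a list of discrete feature values, a smoothed histogram stands in for the estimated distribution, and a query is assigned to the example whose distribution gives it the highest average log-likelihood. The single-resolution histogram, the smoothing constant, and all names are assumptions made for illustration; the representation described above measures joint occurrences across resolutions, which this sketch does not attempt.

```python
import math
from collections import Counter


def fit_distribution(features, bins, smoothing=1.0):
    """Estimate a smoothed histogram over `bins` discrete feature values."""
    counts = Counter(features)
    total = len(features) + smoothing * bins
    return [(counts.get(b, 0) + smoothing) / total for b in range(bins)]


def mean_log_likelihood(distribution, features):
    """Average log-probability that `distribution` assigns to `features`."""
    return sum(math.log(distribution[f]) for f in features) / len(features)


def classify(query, labelled_examples, bins):
    """Return the label whose fitted distribution best explains `query`."""
    scores = {
        label: mean_log_likelihood(fit_distribution(feats, bins), query)
        for label, feats in labelled_examples.items()
    }
    return max(scores, key=scores.get)


# Hypothetical feature lists (for example, quantized filter responses).
examples = {
    "smooth": [0, 0, 1, 1, 0, 1, 0, 0],
    "rough": [0, 3, 1, 3, 2, 0, 3, 2],
}
print(classify([0, 1, 0, 0, 1, 1], examples, bins=4))
print(classify([3, 2, 3, 0], examples, bins=4))
```

The smoothing term keeps every probability nonzero, so a feature value that never appeared in an example lowers the score without making the log-likelihood undefined.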

|

Structure Driven Image Registration

Because of its ability to provide a representation which is
generally robust to the speckle in synthetic aperture radar
(SAR) imagery, the flexible histograms texture matching technique
developed in my master's thesis can be used as the core matching
metric for a SAR image registration system.
While working on this project during the summer of 1997 at MIT and
Alphatech, Inc., I developed such a system.

|

|

|

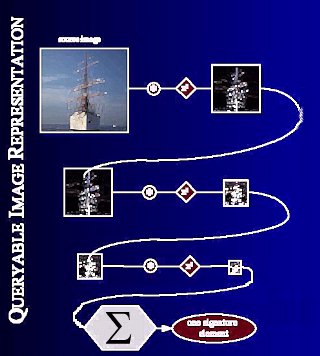

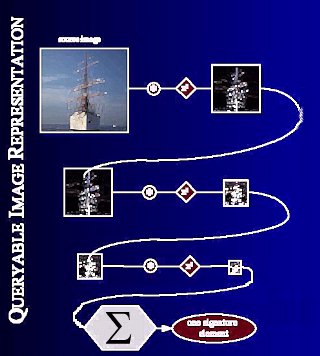

Queryable Image Representations

I am currently working on techniques for automatic retrieval
from image databases. I developed a representation of images
which uses a complex set of filter-networks to capture the
structure within a group of example images, and then uses that to
find similar images in a database.

|

Texture Based Segmentation

By building upon the flexible histograms texture matching
technique developed in my master's thesis, I have developed
texture-driven segmentation. Examples include segmentation of target
vehicles in synthetic aperture radar (SAR) imagery, and of anatomical
structures in magnetic resonance imagery (MRI).

|

|

|

Probabilistic Texture Synthesis

The textures within this web space were synthesized with a
multiresolution sampling procedure which I have developed. In
the first part of a two-phase process, an original input texture is
analyzed to produce a probability density estimator for the 'true'
distribution from which it was generated. In the second phase, a
new texture is synthesized by sampling each spatial frequency
band from this density estimator. The final synthesized texture is
then created by combining these spatial frequency bands.
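
The two phases can be sketched on a one-dimensional "texture". The analysis step splits the input into a coarse band and a detail band, and the synthesis step draws new values for each band from the empirical distribution of the input before recombining them. The two-band split, the independent sampling of each band, and all names are simplifying assumptions made for illustration; the actual procedure uses a richer density estimator than this.

```python
import random


def analyze(texture):
    """Phase 1: split an even-length 1D texture into coarse and detail bands."""
    coarse = [(texture[i] + texture[i + 1]) / 2 for i in range(0, len(texture), 2)]
    detail = [texture[i] - coarse[i // 2] for i in range(len(texture))]
    return coarse, detail


def combine(coarse, detail):
    """Recombine the bands: upsample the coarse band and add the detail band."""
    return [coarse[i // 2] + detail[i] for i in range(len(detail))]


def synthesize(texture, length, seed=0):
    """Phase 2: sample each band from its empirical distribution, then combine."""
    rng = random.Random(seed)          # seeded so the sketch is repeatable
    coarse, detail = analyze(texture)
    new_coarse = [rng.choice(coarse) for _ in range(length // 2)]
    new_detail = [rng.choice(detail) for _ in range(length)]
    return combine(new_coarse, new_detail)


source = [1, 3, 2, 6, 1, 3, 2, 6]      # hypothetical input texture
print(combine(*analyze(source)) == source)
print(synthesize(source, 12))
```

Sampling the bands independently discards the relationship between coarse and fine structure, so this version only conveys the analyze, sample, and combine flow.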

|

Multiple Person Tracking

In the Human Computer Interface (HCI) project at the
MIT Artificial Intelligence Laboratory, I have developed a system to
track the locations of room occupants simultaneously in 2 cameras and
use that information to recover their 3D positions.
This information is used by many HCI room functions. It is also used
directly within a tracking subsystem to direct mobile narrow-focus
cameras to 'foveate' on particular occupants, and to determine which
of several camera views provides the most 'useful' view for recording.
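
One common way to recover a 3D position from two camera views is to intersect the two lines of sight, taking the midpoint of their closest approach when noise keeps them from meeting exactly. The sketch below assumes calibrated cameras whose positions and viewing directions toward the tracked person are already known; the function name and the example coordinates are hypothetical, and the source does not say that the system works this way.

```python
def triangulate(origin_a, dir_a, origin_b, dir_b):
    """Midpoint of the closest approach between two lines of sight."""
    def dot(u, v):
        return sum(x * y for x, y in zip(u, v))

    w = [p - q for p, q in zip(origin_a, origin_b)]
    a, b, c = dot(dir_a, dir_a), dot(dir_a, dir_b), dot(dir_b, dir_b)
    d, e = dot(dir_a, w), dot(dir_b, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("lines of sight are parallel; position is undetermined")
    # Parameters of the closest points along each line.
    t_a = (b * e - c * d) / denom
    t_b = (a * e - b * d) / denom
    point_a = [o + t_a * v for o, v in zip(origin_a, dir_a)]
    point_b = [o + t_b * v for o, v in zip(origin_b, dir_b)]
    return [(p + q) / 2 for p, q in zip(point_a, point_b)]


# Hypothetical setup: two cameras 4 units apart, both looking at (2, 3, 1).
position = triangulate([0, 0, 0], [2, 3, 1], [4, 0, 0], [-2, 3, 1])
print(position)
```

Using the midpoint rather than requiring an exact intersection keeps the estimate well defined when the two image measurements disagree slightly.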

|

|

|

Reconstructing Polyhedra From Hand-Drawn Sketches

I have built a system which can robustly
reconstruct the 3D structure of rectangular polyhedra from
haphazardly hand-drawn sketches.

|

Human Visual Psychophysics

During my final year at Columbia Engineering I became
interested in studying the human visual system as a means of learning
what kinds of mechanisms we employ which allow us to seemingly
effortlessly make sense of the visual world around us.

|

|

Page last modified on 2006-05-27

|

Copyright © 1997-2024, Jeremy S. De Bonet.

All rights reserved.
